Since leaving Epic, I’ve had the opportunity to revisit a lot of the tools I use for programming. Here are some thoughts on the ones I’ve been using:

CoffeeScript

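This is a radical change after working in C++/HLSL for so long. CoffeeScript is just a syntax layer on top of JavaScript, so the trade-offs are similar: it’s dynamically typed, and it runs everywhere. However, it fixes a few of JavaScript’s problems. Most importantly, it makes it impossible to create a global variable by accident: every variable you assign is implicitly declared in the enclosing function’s scope, and each file is compiled into its own wrapper function. It doesn’t add block scoping, though; a variable assigned inside an if/for block is still visible throughout the enclosing function.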
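As a small sketch of the global-variable point (the names `sum` and `total` are made up for illustration): assigning to a new name inside a function produces a local variable, so nothing leaks out the way a forgotten `var` would in JavaScript.

```coffeescript
# Assigning to a new name inside a function declares a local variable;
# the compiler emits the `var` declaration for you.
sum = (numbers) ->
  total = 0
  total += n for n in numbers
  total

console.log sum [1, 2, 3]   # 6

# `total` never leaked out of `sum`, so the existence check fails here.
console.log total?          # false
```

If you really want a global, you have to ask for it explicitly, for example by attaching a property to `window` in a browser or `global` in NodeJS.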

It’s also a whitespace-significant language, which is blasphemy to a C++ programmer, but using it has solidified my opinion that it’s The Right Way. Curly-brace languages (C++, Java, etc.) all require indentation conventions to make the code readable, because when humans look at code, we see the indentation rather than the curly braces. Significant indentation matches up our visual intuition with the compiler’s semantics.

It also doesn’t distinguish statements from expressions, and generally encourages writing functional-style code. Code blocks may contain multiple expressions, but “return” the value of their last expression. Loops are like comprehensions in Haskell; they yield an array with a value for each iteration of the loop, and if/else yields the value of the branch taken.
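Here is a minimal sketch of what that looks like in practice. The function and variable names (`square`, `parity`, `squares`, `labels`) are just illustrations, not anything from a real project:

```coffeescript
# The last expression in a function body is its return value.
square = (x) -> x * x

# if/else is an expression that yields the value of the branch taken.
parity = (n) ->
  if n % 2 is 0 then 'even' else 'odd'

# A loop is an expression too: it collects one value per iteration.
squares = (square n for n in [1..4])

labels = for n in [1..3]
  "#{n} is #{parity n}"

console.log squares   # [ 1, 4, 9, 16 ]
console.log labels    # [ '1 is odd', '2 is even', '3 is odd' ]
```

Note that no `return` keyword appears anywhere; each block simply evaluates to its last expression.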

Lastly, it has “string interpolation”, which is a funny way to say that you can embed expressions in strings:

"Here is the value of i: #{i}"

This turns into string concatenation under the covers, but it’s nicer than writing it explicitly, and is less error-prone than C’s printf or .NET’s String.Format.
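A quick sketch comparing the two forms (the value of `i` is arbitrary). Interpolation only applies to double-quoted strings, and the braces can hold any expression. Newer versions of the compiler may emit JavaScript template literals rather than concatenation, but the resulting string is the same:

```coffeescript
i = 7

interpolated = "Here is the value of i: #{i}"
concatenated = "Here is the value of i: " + i

console.log interpolated                   # Here is the value of i: 7
console.log interpolated is concatenated   # true

# Any expression can go inside the braces, not just a variable name.
doubled = "Twice i is #{i * 2}"
console.log doubled                        # Twice i is 14

# Single-quoted strings are left alone.
literal = 'No interpolation: #{i}'
console.log literal                        # No interpolation: #{i}
```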

NodeJS

This comes along with CoffeeScript (as the compiler host), but is an interesting project on its own: it’s a framework for people using JavaScript to write servers (or other non-browser JavaScript applications).

My game developer instincts say that using JavaScript, a hopelessly single-threaded language, to write server code won’t scale. But using threads for concurrency when developing scalable servers is a distraction; you have to scale to multiple processes, so that may as well be your primary approach to concurrency. The efficiency of any single process is a constant factor on operating costs, but many web applications will simply not work at scale without multi-process concurrency.

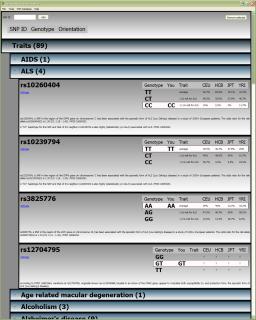

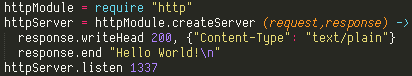

NodeJS also benefits from the lack of a standard I/O API for JavaScript by starting with a clean slate. The default paradigm is asynchronous I/O, which is crucial in a single-threaded server. JavaScript makes this asynchronous code easier to write with lexical closures, and CoffeeScript makes it easier with significant indentation and concise lambda functions. Here is the Hello World example from the NodeJS website written in CoffeeScript:
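In text form, that example looks roughly like the following. Treat it as a reconstruction rather than an exact copy: it’s based on the well-known Hello World from the NodeJS site, and the port (1337), the address, and the `server` variable name are assumptions.

```coffeescript
http = require 'http'

# The callback runs once per incoming request; nothing blocks while waiting.
server = http.createServer (req, res) ->
  res.writeHead 200, 'Content-Type': 'text/plain'
  res.end 'Hello World\n'

# listen() returns immediately; connections are handled asynchronously.
server.listen 1337, '127.0.0.1'

console.log 'Server running at http://127.0.0.1:1337/'
```

The request handler is just an indented lambda passed to `createServer`, which is where the closures and concise function syntax pay off.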

NodeJS also takes advantage of dynamic typing (and again, a clean slate) to form modules from JavaScript objects. In the above code, http is a NodeJS standard module, but you use the same module system to split your program up into multiple source files. In each source file, there is an implicit exports object; you can add members to it, and any other file that “requires” your file will get the exports object as the result.
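As a sketch of the exports mechanism, imagine the following saved as a file called greeter.coffee. The file name and its members (`greet`, `defaultName`) are hypothetical, chosen only to show the shape of a module:

```coffeescript
# greeter.coffee (hypothetical file name)

# Anything attached to the implicit `exports` object becomes part of the module.
exports.greet = (name) -> "Hello, #{name}!"
exports.defaultName = 'World'

# Another source file would load this module with:
#   greeter = require './greeter'
#   console.log greeter.greet greeter.defaultName
#
# Inside this file, the same object is also reachable as module.exports:
console.log module.exports.greet module.exports.defaultName   # Hello, World!
```

The object returned by `require` is an ordinary JavaScript object, so a module’s interface is simply whatever members it chose to attach.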

Sublime Text

After a long time assuming that none of the standalone text editors were worth abandoning Visual Studio for, I was forced to try Sublime Text while in search of CoffeeScript syntax highlighting. I haven’t looked back. It is responsive, pretty, and has a unique and useful multiple selection feature.

You can use it for free with occasional registration nags, but if you do, I’m sure that you will agree that it’s worth the price ($60).

Dina font

This is a font designed for programmers; all the symbols look nice, and i/I/l/1 are all distinct. The CoffeeScript/NodeJS example above uses it. I’ve been using this font for years, though I had to abandon it when Visual Studio 2010+ removed support for bitmap fonts. Now that I’m using Sublime Text, I can use it again.

The argument for a bitmap font is that our displays don’t have high enough resolution to render small vector fonts well. TTF rendering ends up with lots of special rules for snapping curves to pixels, and Windows uses ClearType to get just a little extra horizontal resolution. It’s simpler to just use a bitmap font that is mapped 1:1 to pixels. This will all change once we have “retina” resolution desktop monitors; 200 DPI should be enough to render really nice vector fonts on a desktop monitor.

As a side note, Apple has always tried to render fonts faithfully to how they would look printed, without snapping the curves to make them sharper on relatively low DPI displays. The text in MacOS/iOS looks a little softer than Windows as a result. That weakness is solved by high DPI displays; I’m waiting for my 30″ retina LCD, Apple!

Git

My plan was initially to use Perforce; I even went to the trouble of setting up a Perforce server with automated online backup on a Linux VM (and believe me, that is trouble for somebody who isn’t a Linux guy). But after going to that trouble, I had to go visit my family in Iowa on short notice, and wasn’t able to get a VPN server running before I left. While I was in Iowa, I wrote enough code that I decided I needed revision control badly enough to use Git temporarily until I got home. And it’s not great, but it works.

One interesting aspect of it is that editing is not an explicit source control action. Instead, Git tries to detect changes you’ve made to your local files. Committing those changes is still explicit, but in the UI I’m using (GitHub) files you’ve modified just automatically show up in the list of files you may commit. A nice side-effect of this is that it can detect renames (and theoretically copies, although I haven’t gotten that working). So you can move files around in Windows Explorer, and Git will notice that the contents are the same (or even mostly the same), and track it as a rename instead of a delete/add.

I’ve been using the GitHub frontend for Windows, but it’s very basic. The UI is pretty, but doesn’t expose a lot of the functionality of Git. It’s still a better workflow than using the command-line for seeing what your local changes are and committing, but there’s no way to see the revision history for a file or other things that I did all the time in the Perforce UI (as ugly as it was).

But it works well enough for my purposes that I have stuck with it after my trip to Iowa. I have my repository in my Dropbox, and so it’s transparently backed up and synced between my laptop and PCs. At some point, I will have to add a server component, but as long as I’m working by myself, Git works just fine without a server.